Why Website Performance Metrics Don’t Tell the Whole Story

Posted by: Corey Smith on May 4, 2026 at 11:22 am

When your website feels fast, visitors stay longer, explore more pages, and are far more likely to convert into leads or customers. Slow experiences drive people away before they ever see your offer. On the search side, Google has made clear that real‑world user experience signals—especially Core Web Vitals—play a role in rankings and how answer engines surface your content. AEO (Answer Engine Optimization) rewards pages that deliver quick, stable experiences because those are the ones people actually engage with. Lab scores alone do not decide your fate, but ignoring the gap between the numbers and the lived experience can quietly cost you both traffic and trust.

I wanted to explore this further, so I used the Smithworks website as a test bed. I looked at why lab numbers are noisy and often disagree with each other, why chasing a perfect synthetic score almost always means giving something up, and how we made concrete improvements while keeping the features our marketing stack actually needs.

As you read through, you'll likely find a number of terms that are new. I've included a glossary at the end of my post to help answer those questions.

How PageSpeed Insights and Lighthouse actually work (and why the same site can score differently minutes apart)

PageSpeed Insights and Lighthouse are lab tools that run in a controlled, emulated environment rather than on a real visitor’s device. The tool loads your page with a simulated mobile device, throttled network, and slowed‑down CPU so every test is repeatable and comparable across sites. It is not pulling data from a physical phone sitting in Google’s data center; it applies a standardized stress profile designed to expose bottlenecks consistently.

Even though the environment is controlled, you will still see noticeably different results from run to run. Slight variations in server response times, third-party script loading order, network micro-fluctuations, and exact JavaScript execution timing still occur, even under the same standardized conditions. This is completely normal and expected. That is why we recommend running multiple tests and using the median rather than relying on any single run.
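As a minimal sketch of the "use the median" advice, the snippet below summarizes several runs of the same page. The scores are made-up placeholders, not measurements from this site, and `summarize_runs` is a hypothetical helper name.

```python
from statistics import median


def summarize_runs(scores):
    """Return the median, lowest, and highest score from repeated lab runs."""
    if not scores:
        raise ValueError("need at least one run")
    return {
        "median": median(scores),
        "min": min(scores),
        "max": max(scores),
        "spread": max(scores) - min(scores),
    }


# Placeholder Performance scores from five hypothetical runs of one URL.
runs = [58, 71, 64, 49, 66]
summary = summarize_runs(runs)
print(summary)
```

The median is the number to track over time. The spread is a reminder of how far any single run can land from it.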

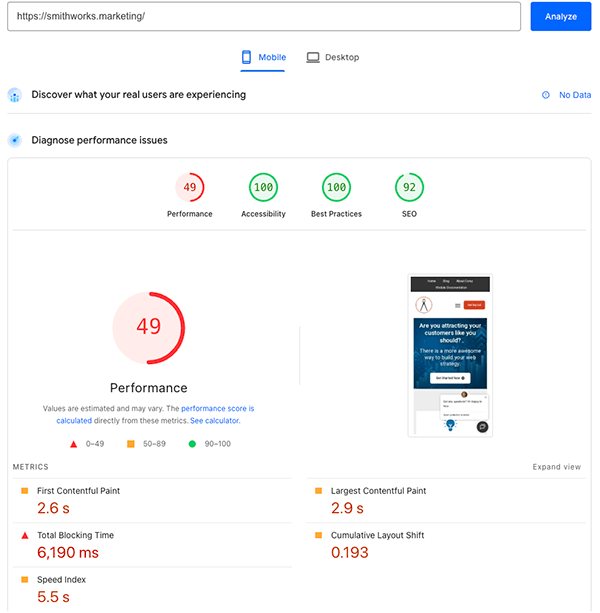

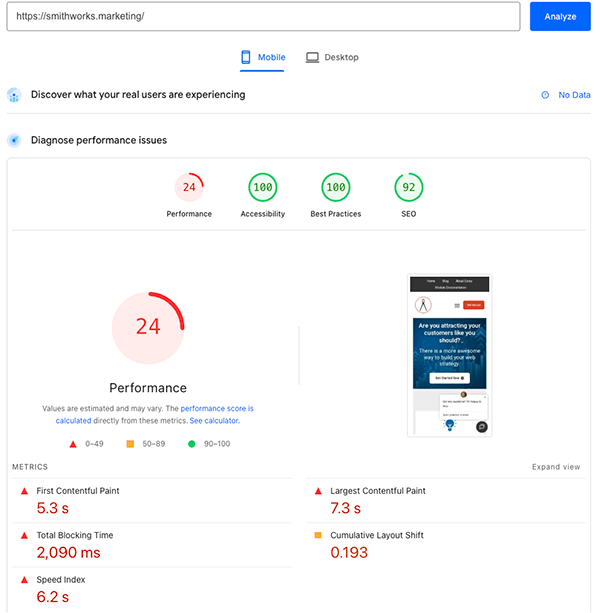

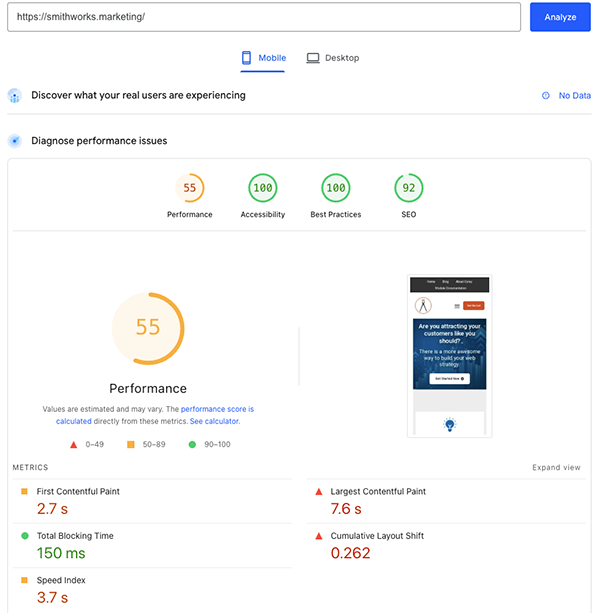

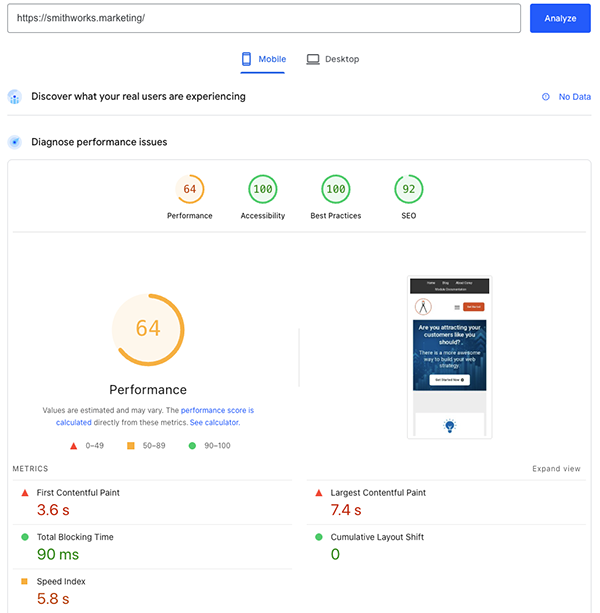

The screenshots in this section illustrate the point. They were all run on the homepage under the same conditions, yet the performance numbers moved around dramatically. That variance is expected, not a sign you are doing it wrong. The lab environment is intentionally harsh so it can surface render‑blocking CSS, heavy images, third‑party scripts, and layout shifts every single time.

Before ChatBot Removal

The following two tests were run with a ChatBot installed on my site, within a few moments of each other.

After ChatBot Removal

I wanted to see how the ChatBot affected the performance metric. After I removed the ChatBot from my website, I ran these two tests again within a few minutes of each other, and these are the results.

It's a useful example. Comparing the best run before the ChatBot removal with the worst run after it shows negligible change, but comparing the worst run before with the best run after shows a dramatic improvement. If we take any single run at face value, we can't paint a realistic picture of what we are improving.
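To make that comparison concrete, here is a small sketch that pairs runs three different ways. The scores are placeholders chosen for illustration, not the numbers from the screenshots, and `compare_runs` is a hypothetical helper.

```python
from statistics import median


def compare_runs(before, after):
    """Compare two sets of lab scores three ways (higher score = better)."""
    return {
        # Most pessimistic pairing: best "before" run vs worst "after" run.
        "best_before_vs_worst_after": min(after) - max(before),
        # Most optimistic pairing: worst "before" run vs best "after" run.
        "worst_before_vs_best_after": max(after) - min(before),
        # Median vs median is the steadier estimate of the real change.
        "median_change": median(after) - median(before),
    }


# Placeholder Performance scores for illustration only.
before_removal = [52, 61, 55]
after_removal = [62, 74, 68]
result = compare_runs(before_removal, after_removal)
print(result)
```

With these placeholder numbers the same change looks like a 1-point gain or a 22-point gain depending on which single runs you pick, while the medians suggest something in between.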

The pros and cons of lab tests like PageSpeed Insights and Lighthouse

Lab tools shine when you treat them as diagnostic instruments rather than final report cards. Their biggest strength is repeatability. Because they use the same emulated device, throttled network, and cold‑load conditions, you can run before‑and‑after tests and have reasonable confidence that an improvement that holds up across several runs is real. They reliably flag render‑blocking resources, unused JavaScript, font chains, and layout‑shift culprits so you know exactly where to focus next. Just remember that, because so much on the web varies from one moment to the next, they are relatively consistent at best.

The downside is that lab results do not perfectly predict how a human on fiber or modern hardware will experience the site. A visitor with fast broadband and a newer device will often beat the lab numbers handily, while someone on weaker hardware or high latency may see worse performance than the model predicts. The tool deliberately picks one harsh‑but‑stable scenario to keep comparisons fair across the entire web, not to mirror every possible user connection.

One of the clearest ways to see the difference between lab conditions and real-world use is by comparing the same URL under mobile and desktop profiles in PageSpeed Insights. Mobile applies much heavier throttling, which is why the Performance score drops even when the page markup is identical.

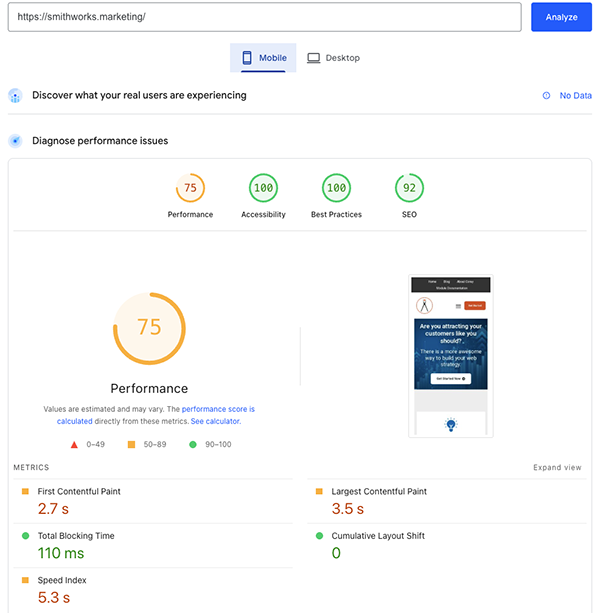

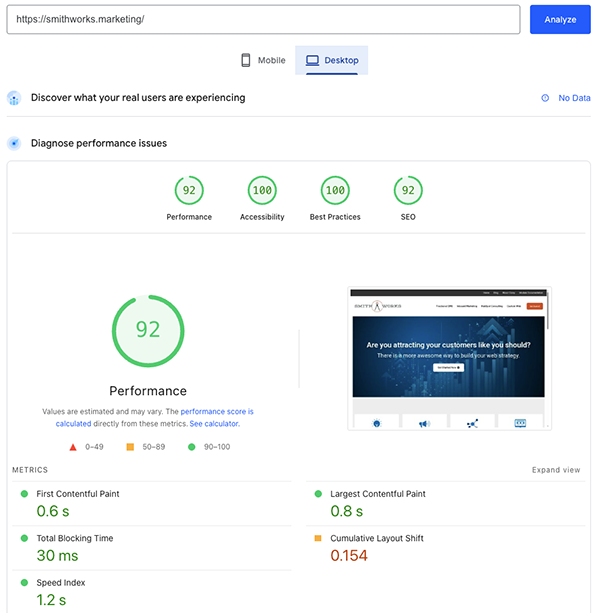

After I finished the optimizations I had planned, this was the resulting comparison of mobile versus desktop. Same site, same test, different results depending on the profile the test emulates.

| Metric | Mobile | Desktop |
|---|---|---|
| Performance (0–100) | 68 (needs improvement) | 92 (good) |
| LCP | 7.1 s | 0.8 s |
| FCP | 2.7 s | 0.6 s |
| Speed Index | 5.3 s | 1.2 s |
| TBT | 110 ms | 30 ms |
| CLS | 0 | 0.154 (needs improvement on that run) |
| Accessibility | 100 | 100 |
| Best Practices | 100 | 100 |
| SEO | 92 | 92 |
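For readers who want to see how labels like "good" and "needs improvement" are assigned, here is a rough sketch that rates the LCP and CLS values from the table above. The limits are Google's published Core Web Vitals thresholds (2.5 s and 4.0 s for LCP, 0.1 and 0.25 for CLS); the `rate` helper and the dictionary layout are my own illustration, not how PageSpeed Insights is implemented.

```python
# (good_limit, poor_limit) per metric: values at or below good_limit are
# "good", values above poor_limit are "poor", anything between the two is
# "needs improvement".
THRESHOLDS = {
    "LCP": (2.5, 4.0),   # seconds
    "CLS": (0.1, 0.25),  # unitless score
}


def rate(metric, value):
    """Rate one metric value against its thresholds."""
    good_limit, poor_limit = THRESHOLDS[metric]
    if value <= good_limit:
        return "good"
    if value <= poor_limit:
        return "needs improvement"
    return "poor"


# LCP and CLS values copied from the mobile/desktop table.
table = {
    "mobile": {"LCP": 7.1, "CLS": 0.0},
    "desktop": {"LCP": 0.8, "CLS": 0.154},
}
ratings = {
    profile: {metric: rate(metric, value) for metric, value in metrics.items()}
    for profile, metrics in table.items()
}
print(ratings)
```

The same page rates "poor" on mobile LCP and "good" on desktop LCP, which is the lab-profile gap this section describes.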

Performance is always a negotiation—you have to decide what you’re willing to give up

Every capability you add to a marketing site competes for bytes, main‑thread time, and layout stability. On our website the cost stack showed up repeatedly in our Lighthouse and PageSpeed Insights runs.

| What you might want | What the lab often charges you |
|---|---|
| Large hero imagery (full-bleed, high resolution, multiple breakpoints) | LCP weight and decode cost; Speed Index paints later unless you aggressively size and prioritize the real LCP candidate. |
| Video (background, embed, HubSpot video) | Extra bytes, poster images, and sometimes main-thread work depending on how it is loaded. |
| JavaScript-heavy features | Total Blocking Time, long tasks: HubSpot visitor / bundle code, GTM, reCAPTCHA, web interactives, etc. |
| Live chat / Conversations | Main thread, network, and sometimes CLS dominated by the chat widget. |
| Analytics and tag managers | Unused JS warnings and attribution work. |
| HubSpot “collect non-HubSpot forms” | Additional scripts and traffic that add visible lab cost. |
| Brand typography + icon fonts | Render-blocking CSS chains and font-display nags. |
| Preconnect hints | Warnings when more than four are used or when they are unused. |

Our testing showed the real trade-offs behind each of these small decisions. None of these items are inherently bad. They are deliberate business choices. If you want live chat during business hours, full GA4 and GTM tracking, reCAPTCHA protection, rich hero imagery, and HubSpot form intelligence, you should expect the lab scores to reflect that weight. The honest conversation is about which concessions you are willing to make and which non‑negotiables stay in place. Lab scores will be lower when you keep those capabilities, and that is a trade‑off worth making when the features drive real leads and customer experience.
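The preconnect row in the table above mentions two warnings: too many hints and unused hints. The sketch below mimics those two checks on a list of hinted origins and a list of URLs the page actually requested. It is only an illustration of the idea, not Lighthouse's audit logic, and every origin and URL in it is hypothetical.

```python
from urllib.parse import urlsplit

MAX_PRECONNECTS = 4  # limit taken from the preconnect row in the table


def origin(url):
    """Reduce a URL to its scheme://host origin."""
    parts = urlsplit(url)
    return f"{parts.scheme}://{parts.netloc}"


def audit_preconnects(preconnect_origins, requested_urls):
    """Flag hints that exceed the limit or were never used by a request."""
    used = {origin(url) for url in requested_urls}
    unused = [hint for hint in preconnect_origins if origin(hint) not in used]
    return {
        "too_many": len(preconnect_origins) > MAX_PRECONNECTS,
        "unused": unused,
    }


# Hypothetical hints and requests for illustration.
hints = [
    "https://fonts.gstatic.com",
    "https://js.example-cdn.test",
    "https://unused.example.test",
]
requested = [
    "https://fonts.gstatic.com/s/font.woff2",
    "https://js.example-cdn.test/app.js",
]
report = audit_preconnects(hints, requested)
print(report)
```

A hint that shows up under `unused` costs a connection setup without saving any time, so it is usually the first one to cut.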

Just a little side note: the same problems can and do exist on any website. This post focuses on HubSpot because this website is built in HubSpot. Whether a site is built in WordPress, Drupal, or plain HTML, there is a certain amount of technical overhead you have to decide is important enough to keep.

What the final decisions on this site actually showed

We took the big hero image at the top of the page very seriously. We added special code so the website shows a smaller, faster-loading picture on phones and tablets, and a larger one on big computer screens. We also made a bunch of other behind-the-scenes improvements, removed some fancy animations, cleaned up the JavaScript code, and even deleted the code for the HubSpot chat widget because we decided not to use it anymore.
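For the curious, one common way to serve different hero image sizes is the `srcset` and `sizes` attributes on an `<img>` tag. I am not reproducing the site's actual markup here. The sketch below is a hypothetical helper that builds such a tag; the file naming scheme, widths, and alt text are assumptions for illustration, and `fetchpriority="high"` is an optional hint that the image is the likely LCP candidate.

```python
from html import escape


def build_hero_img(base_name, widths, alt):
    """Build an <img> tag whose srcset lets the browser pick a fitting file."""
    ordered = sorted(widths)
    # One candidate per width, e.g. "hero-480.jpg 480w".
    srcset = ", ".join(f"{base_name}-{w}.jpg {w}w" for w in ordered)
    return (
        # src is the fallback for browsers that ignore srcset.
        f'<img src="{base_name}-{ordered[-1]}.jpg" '
        f'srcset="{srcset}" '
        # A full-bleed hero spans the whole viewport width.
        'sizes="100vw" '
        'fetchpriority="high" '
        f'alt="{escape(alt, quote=True)}">'
    )


# Hypothetical file names and widths.
tag = build_hero_img("hero", [1600, 480, 960], "Team at work")
print(tag)
```

With markup like this, a phone can download the 480-pixel file while a large desktop screen gets the 1600-pixel one, which is where most of the LCP savings on small devices come from.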

That was just part of what we did.

Even after all those changes, one set of tests still showed the mobile loading time jumping around — sometimes around 13 seconds and other times around 6 seconds. We knew this was normal variation, not a sign that our improvements failed. The same page had shown big swings like this before. This is the kind of lab honesty we want every business owner to understand.

The site still feels fast to real visitors on normal home Wi-Fi or office internet. That’s because most people aren’t using the super-slow, stressed-out conditions that the testing tool uses. Lab tools test a deliberately harsh scenario. Real users and real-world data show whether your site actually feels good to the people who matter.

The Final Results

But, is a lower lab score bad for SEO?

You might have noticed that my SEO result was consistently at 92 while my Accessibility and Best Practices were consistently at 100. My technical work and tests only affect that performance number.

You might rightly ask: if the performance score is low, does that hurt SEO?

The right answer is, "Maybe, but probably not as much as you'd guess."

There is no clean mapping like: “Lighthouse Performance = 58 ⇒ rankings −X.” Public guidance from Google has emphasized real-world experience and Core Web Vitals in field data where available, but it has not provided a single lab trophy number. Google wants to provide the best experience and content to its users. So, yes, we want a site to be faster, but we still have to be willing to trade some performance for user experience.

And, back to my 92 in SEO versus a perfect 100: this post has been about trade-offs in performance. The reality is, there may be other trade-offs that you have to decide to make depending on the experience you want. I have strategically chosen a few trade-offs in SEO. Nothing is ever absolute (though I do think there is no excuse for Accessibility and Best Practices to be below 100).

Choosing the right trade-offs for your business

Lab results will disagree with each other and with how the site feels sometimes. That is okay. What matters is optimizing inside the rails of your actual business requirements while using the tools exactly as intended—to surface the next best improvement and expose the real trade‑offs. We are not optimizing for a screenshot of a score. We are optimizing for your visitors, your compliance and analytics requirements, and the experience signals search engines weight in the real world.

Our screenshot examples above, along with the concrete before-and-after data from our own testing on this website, show that performance work is both technical and strategic. You get to decide which capabilities stay and which levers you are willing to pull. When you approach it with that mindset, the numbers become useful instead of stressful.

Glossary of Key Performance Terms

PageSpeed Insights (PSI)

Google’s free tool that runs Lighthouse audits to measure website performance. It provides both lab data and real-user field data (when available) and is one of the most widely used performance testing tools.

Lighthouse

Google’s open-source auditing tool that evaluates performance, accessibility, best practices, and SEO. It powers PageSpeed Insights and helps identify issues with Core Web Vitals and render-blocking resources.

Lab Tests (Lab Data)

Controlled, synthetic performance tests that simulate a throttled mobile device, slow network, and reduced CPU. They are repeatable and excellent for diagnosing issues, but they don’t always match real user experience.

Field Data / Real-User Data (CrUX)

Actual performance metrics collected from real Chrome users visiting your site. This data reflects genuine visitor experiences and carries more weight for SEO and Core Web Vitals ranking signals.

Largest Contentful Paint (LCP)

A Core Web Vital metric that measures loading performance. It tracks how quickly the largest image or text block becomes visible. Aim for under 2.5 seconds for good performance.

First Contentful Paint (FCP)

The time it takes for the first piece of content (text or image) to appear on the screen. Faster FCP contributes to a better perceived loading speed.

Speed Index

A performance metric that shows how quickly a page’s visible content is populated during load. Lower numbers mean the page feels faster to users.

Total Blocking Time (TBT)

The total time the main thread is blocked by long tasks, preventing the page from responding to user input. Lower TBT creates a smoother, more responsive experience.
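As a rough illustration of how TBT adds up: only the part of each main-thread task beyond 50 ms counts as blocking time. The sketch below applies that rule to a list of task durations. The durations are made up, and the sketch assumes every task falls inside the window the tool measures.

```python
LONG_TASK_THRESHOLD_MS = 50  # tasks longer than this count as "long tasks"


def total_blocking_time(task_durations_ms):
    """Sum the portion of each long task that exceeds the 50 ms threshold."""
    return sum(
        duration - LONG_TASK_THRESHOLD_MS
        for duration in task_durations_ms
        if duration > LONG_TASK_THRESHOLD_MS
    )


# Hypothetical main-thread task durations in milliseconds.
tasks = [30, 120, 50, 75]
tbt = total_blocking_time(tasks)
print(tbt)
```

Here the 120 ms task contributes 70 ms and the 75 ms task contributes 25 ms, while the two shorter tasks contribute nothing. That is why breaking one long script into smaller tasks can lower TBT even when the total work stays the same.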

Cumulative Layout Shift (CLS)

A Core Web Vital that measures visual stability. It tracks unexpected layout shifts while the page loads. A good score is under 0.1 for a stable, frustration-free experience.

Core Web Vitals

Google’s key user experience metrics (LCP, CLS, and Interaction to Next Paint) used as ranking signals in search and answer engines. Strong Core Web Vitals improve both SEO and AEO performance.

Render-blocking Resources

CSS or JavaScript files that stop the browser from rendering the page until they finish loading. Optimizing these is one of the most effective ways to improve speed.

Preconnect Hints

HTML instructions that tell the browser to establish early connections to important third-party domains. Used properly, they speed up loading of fonts, scripts, and analytics.

HubSpot Collected Forms

A HubSpot feature that auto-captures submissions from non-HubSpot forms. While useful for lead generation, it adds extra JavaScript that can increase Total Blocking Time and hurt lab scores.

Answer Engine Optimization (AEO)

Optimizing your website and content so it performs well in AI-powered answer engines and featured snippets, in addition to traditional search results. Fast, stable experiences are critical for AEO success.
