The Fallacy of AI: Why It's Brilliant But Still Needs You

Tagged: AI

By: Corey Smith on February 9, 2026 at 08:31 am

In November 2022, ChatGPT (built on GPT-3.5) hit the world stage like a Mack truck. Large language models had been around before then, but access was fairly limited. And ChatGPT wasn't the first AI, either. We've had task-specific AI models for many years. In just over three years since ChatGPT's launch, AI has come a long way. Even if you didn't realize it, you've probably been using AI for years. Here are some examples:

- 2000-2010: Spam filters, recommendations, fraud detection, etc.

- 2011-2018: Siri, Alexa, Watson, etc.

- 2019-2021: GPT-based tools like Copilot, Jasper, etc.

ChatGPT changed the way the world looked at AI and put capabilities in the hands of the average person that simply weren't available to them before.

Modern AI

It feels a bit funny to me to talk about "modern" AI. You might ask, "Isn't all AI modern?" Even spam filters, at roughly 25 years old, might feel modern. But in technological terms, 25 years is multiple lifetimes. One of the first chatbots ever, ELIZA, was developed between 1964 and 1967. While it's not exactly what we think of as AI today, it was most certainly an artificial intelligence.

But when I think about modern AI, I'm thinking about what's been developed in the last year or two. The last 12 months alone have seen growth in capability and adoption that dwarfs any other technology we've ever seen. It's forcing conversations, and even legislation, that no one was considering just a few short years ago.

Large language models (LLMs) like ChatGPT and Grok are pretty amazing, but what's even more amazing is how LLMs can power task-specific agents that help you.

A simple example: about a year ago, I was struggling to get ChatGPT to help me build a module for HubSpot. I wanted to see how well it would work and to learn whether using AI could make me more efficient. Long after I'd passed the time it would have taken to build the module by hand, I learned about Claude Code and Cursor. I pasted my initial ChatGPT prompt into Cursor to see what it would make for me. I was shocked when it created my module in one shot. Not a single edit was required.

I didn't try Claude Code for comparison because I was happy with how Cursor performed. Since then, I don't do any hand coding (well, not much anyway, because some simple things are still faster by hand). I've built context documents, processes, and checks to make AI work the way I need it to.

The Coffee Mug Problem

Last summer, I wrote a blog post about "Defining Your Unique Selling Proposition." I had in mind a poster we had at the office some time ago; you've likely seen something similar. Its caption read, "Just because you are unique, it doesn't mean you are useful." The picture on that poster doesn't matter here, but I wanted to create an image that represented the idea.

The banner image in that post is a coffee mug with holes in it. What I really wanted to show was a mug with coffee dripping out of those holes. AI simply couldn't figure it out. No matter how I approached it, it wouldn't work. I tried ChatGPT. I tried Adobe Firefly. I tried Grok. All had the same problem.

Take a look at the two images below:

While writing this post, I tried again to see whether the models had improved since August. The short answer is no, no they haven't.

Here is the prompt I used for the image on the left:

Create for me an image of a mug of coffee that has holes in it with coffee spilled from the holes and you can see the coffee through the holes in the mug

Then, in a separate ChatGPT chat, I went back and forth on the issue to see whether I could actually solve it. I had that new session analyze why the first attempt didn't work. After it had given me assurances (in the only way a computer can), I asked it to try again. The result was the image on the right.

In case it isn't obvious, neither of those images could exist in real life, which is a hint that they're AI-generated.

*See the footnote about Google's Imagen at the end of this post.

The Deep Flaws of AI

Okay, don't get me wrong. I love AI and use it all the time. I've spent hours arguing with it over stupid things (like the coffee mug above). And the subheading "The Deep Flaws of AI" is intentionally clickbait-esque and very hyperbolic.

A better title for that section might be "Understanding the Limitations of AI."

The coffee mug problem demonstrates several key issues we have to understand if we're going to use AI to do more, more efficiently, without failing miserably.

Below are my key takeaways from spending, quite literally, thousands of hours working with a variety of AI models on a variety of tasks.

AI Cannot Invent New Things

Most of the things we have in life are simply recombinations of old things. The simplest example is the iPhone, a recombination of a phone, a handheld computer, and a portable music player. But the way it was implemented was revolutionary. It changed the way we communicate and the way we're entertained.

Being a recombination isn't what made it revolutionary; how it was implemented is. When I challenged ChatGPT on this idea, its response was, "Humans remix things all the time."

So, I prompted the following:

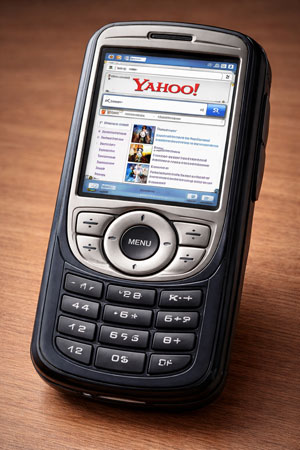

I need you to create for me a new phone. I want to combine a portable music player that plays MP3. But I also want to be able to browse the web. Using only context that would have reasonably been available in 2005, create an image of what this device might look like as a prototype.

This is the picture it created... as if it were trying to prove my point.

AI can remix ideas, but truly revolutionizing something still requires a human mind, because AI has never lived a single day in the real world. It can blend categories. It can combine frameworks. It can produce something that feels new, but only insofar as the components already exist in its context.

AI doesn’t have conviction. It doesn’t experience failure. It doesn’t pursue an idea for ten years and refine it through tension and resistance. It generates in one shot.

Technically speaking, LLMs work like interpolation engines. They predict the next token based on patterns learned from prior data. That means every output is derived from statistical relationships in existing material. Even when something feels innovative, it's recombination, not origination.
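To make "recombination, not origination" concrete, here is a deliberately tiny Python sketch. It is a word-level bigram model, which is far simpler than a real LLM (those use neural networks over subword tokens), and the training sentences and the names `CORPUS`, `train_bigrams`, and `generate` are made up for illustration. Still, it shows the core behavior: the generated sentence never appears in the training data, yet every word-to-word step in it does.

```python
from collections import Counter, defaultdict

# Hypothetical toy training data. Real models train on vastly more text.
CORPUS = (
    ["a phone makes calls"]
    + ["a phone plays games"] * 2
    + ["my player plays music"] * 3
)


def train_bigrams(sentences: list[str]) -> dict[str, Counter]:
    """Count how often each word is followed by each other word."""
    counts: dict[str, Counter] = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.split()
        for prev, nxt in zip(words, words[1:]):
            counts[prev][nxt] += 1
    return counts


def generate(counts: dict[str, Counter], start: str, max_words: int = 6) -> str:
    """Repeatedly append the most frequent follower of the last word."""
    words = [start]
    while len(words) < max_words:
        followers = counts.get(words[-1])
        if not followers:  # nothing ever followed this word in training
            break
        words.append(followers.most_common(1)[0][0])
    return " ".join(words)


model = train_bigrams(CORPUS)
sentence = generate(model, "a")
print(sentence)            # a phone plays music
print(sentence in CORPUS)  # False: a "new" sentence built only from seen pieces
```

The output looks like an invention (a phone that plays music), but the model only stitched together transitions it had already counted. It cannot produce a word or a step it has never seen.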

What you can do about it:

- Use AI for initial ideation and to expand the idea space, not to finalize it.

- Apply human judgment in selection and refinement.

- Stress-test ideas against real-world constraints. Never assume AI is doing it right.

- Expect iteration. Don’t expect breakthroughs in one prompt.

AI Has a Bad Memory

If my ChatGPT had feelings, it would be sad that I say it has a bad memory. It wanted the title of this section to be "AI Has a Short Attention Span (When You Give It Too Much at Once)." Yes, its version of that subheading is technically accurate, but I don't think that human description fits AI the way it thinks it does.

I don't agree with ChatGPT's attempted change to my subheading because I've had too many working sessions in which it forgot what I said after a single prompt.

As I mentioned above, I use Cursor for development a lot. Cursor lets you add context files and set up rules files. The idea is that it will always have the context and, at a minimum, always have the rules. It fails often enough that it's more than just a short attention span.

Here is ChatGPT's description of how it handles this (the italics are my additions; I crossed out what I disagreed with):

If AI were a person, it would be that incredibly smart employee who nods confidently while you’re giving instructions… and then quietly misses one of the five most important requirements... *sometimes over and over again, even when you are careful to remind, be explicit, write down the instructions with clearly defined steps, then remind again.* Not because it’s incapable. Not because it doesn’t care.

~~But because you just handed it a stack of constraints thicker than a legal contract and expected perfect execution in one go.~~ *But because sometimes it just ignores you because its assumptions are more important than your requirements.*

The reality is, AI doesn’t actually have memory the way humans do. It operates inside a fixed context window. Everything you say competes for attention inside that window. The model doesn’t track a checklist (the way it probably should). It doesn’t maintain a durable internal state. It weighs tokens probabilistically (that's a word that AI wrote) and predicts what comes next. When instructions get dense, some constraints get diluted.

I may be harsh in my view, but the reality is that we throw a lot at AI, and it's trying to keep track of a lot of things. I think of it as the smartest employee you'll ever have, one with a bad short-term memory. I can see why it calls this a short attention span, but the responses I've gotten while arguing with it suggest it simply doesn't remember to check the lists I gave it.
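Here is a simplified Python sketch of one way a fixed context window can cause that kind of forgetting. It assumes a naive strategy where the oldest messages are dropped first, and it assumes one word equals one token. Real tools, Cursor included, may pin rules, summarize history, or manage context in other ways, so treat this as an illustration of the mechanism, not a description of any product. The names `count_tokens` and `fit_to_window` and the sample conversation are hypothetical.

```python
def count_tokens(text: str) -> int:
    # Simplifying assumption: one whitespace-separated word = one token.
    # Real tokenizers split text into subword pieces, so counts differ.
    return len(text.split())


def fit_to_window(messages: list[str], max_tokens: int) -> list[str]:
    """Keep the newest messages that fit in the window, dropping older ones."""
    kept: list[str] = []
    used = 0
    for message in reversed(messages):  # walk from newest to oldest
        cost = count_tokens(message)
        if used + cost > max_tokens:
            break  # this message and everything older falls out of view
        kept.append(message)
        used += cost
    return list(reversed(kept))  # restore chronological order


# Hypothetical conversation: the rule was stated first.
history = [
    "RULE: never use inline styles",
    "Build a HubSpot hero module with a headline and button",
    "Make the button larger",
    "Add a background image option",
]

window = fit_to_window(history, max_tokens=20)
for message in window:
    print(message)
# The rule is no longer part of what the model "sees".
```

Nothing in this sketch "decides" to ignore the rule. It simply stops being part of the input once newer messages fill the budget, which is why restating key constraints in later prompts helps.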

What you can do about it:

- Break complex requests into stages instead of one mega-prompt.

- Explicitly prioritize: “The most important requirement is…”

- Ask it to restate the constraints before executing.

- Don’t assume persistence unless you’ve built a system that supports it.

- When it's in a failure loop... stop, simplify, and test.
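The first four suggestions can be combined into one habit: split the work into stages and re-send the rules, top priority first, with every stage. Below is a minimal Python sketch of that idea. The function name, prompt wording, and example rules are hypothetical, and it only builds the prompt text; how you send each prompt (a chat window, Cursor, or an API) is up to you.

```python
def build_stage_prompts(rules: list[str], stages: list[str]) -> list[str]:
    """Build one small prompt per stage, restating the rules every time.

    rules[0] is treated as the single most important requirement.
    """
    if not rules:
        raise ValueError("at least one rule is required")
    prompts = []
    for number, stage in enumerate(stages, start=1):
        lines = [f"The most important requirement is: {rules[0]}"]
        lines += [f"Also required: {rule}" for rule in rules[1:]]
        lines.append(f"Step {number} of {len(stages)}: {stage}")
        lines.append("Before you start, restate every requirement above.")
        prompts.append("\n".join(lines))
    return prompts


# Hypothetical rules and stages for a HubSpot module build.
rules = [
    "Do not use inline styles",
    "Keep the module mobile friendly",
]
stages = [
    "Define the module fields",
    "Write the HTML template",
    "Write the CSS",
]

for prompt in build_stage_prompts(rules, stages):
    print(prompt)
    print("---")  # send each prompt separately and review the reply first
```

Because the rules travel with every stage, nothing depends on the model remembering an earlier message, and the restatement step gives you a quick check before any work happens.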

AI Is Lazy (Because That's What It's Designed to Be)

AI makes assumptions about everything, all the time. In humans, we'd call that behavior laziness.

If you have a child (or have ever been one), you know that kids want answers all the time. As an adult who hears "Why?" constantly, you can understand how sometimes you just want the questions to stop. So you start making up answers that will satisfy the inquisitive child.

- Why is the sky blue?

- Do brown cows make chocolate milk?

- Where do babies come from?

Now apply that to AI. You can start to see how many resources it might take to answer even the simplest questions, especially when it may think it already knows the answer. If it already knows the answer, why devote computing power to looking it up?

Because of this laziness (or tendency to assume rather than research), we have to recognize that AI doesn’t naturally pause to clarify definitions. It doesn’t surface its assumptions. It simply answers what it thinks you are asking. And the answer usually sounds polished enough that most people stop there.

Under the hood, large language models optimize for the most statistically likely continuation of text. That means they default to:

- Common examples in public discourse.

- Dominant framings.

- Widely accepted talking points.

- Digestible summaries.

AI would tell you it isn’t laziness. It’s probabilistic efficiency (yeah, that sentence is AI writing, too). The model isn’t trying to cut corners. It’s trying to complete the pattern as efficiently as possible.

But the shortest path to an answer often skips the nuance. And incomplete answers, accepted at face value, can lead to incomplete or even false conclusions.
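A minimal Python sketch of why the most common framing tends to win. The counts are made up and stand in for how often each framing shows up in training data; real LLMs score every possible next token with a neural network, but the final step of turning those scores into probabilities (a softmax, often adjusted by a "temperature" setting) works along these lines. The function name `next_choice_probs` is hypothetical.

```python
import math

# Hypothetical counts of how often each framing appears in training data.
framing_counts = {"dominant framing": 6, "alternative framing": 3, "minority view": 1}


def next_choice_probs(counts: dict[str, int], temperature: float) -> dict[str, float]:
    """Turn counts into probabilities, sharpened or flattened by temperature.

    Computes softmax(log(count) / temperature), which equals
    count ** (1 / temperature) normalized so the values sum to 1.
    """
    scores = {k: math.log(v) / temperature for k, v in counts.items()}
    top = max(scores.values())
    # Subtract the max score before exp() for numerical stability.
    weights = {k: math.exp(s - top) for k, s in scores.items()}
    total = sum(weights.values())
    return {k: w / total for k, w in weights.items()}


for t in (1.0, 0.5, 0.1):
    probs = next_choice_probs(framing_counts, t)
    print(t, {k: round(p, 3) for k, p in probs.items()})

# Greedy decoding (always take the top choice) returns the dominant framing every time.
greedy = max(framing_counts, key=framing_counts.get)
print(greedy)
```

At a temperature of 1.0 the minority view still gets a 10% share; as the temperature drops, nearly all of the probability collapses onto the dominant framing. Prompting for alternative framings or dissenting views is the prompt-level way to pull those lower-probability answers back into view.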

What you can do about it:

- Ask it to define key terms before answering.

- Prompt for alternative framings or dissenting views.

- Follow up with: “What assumptions did you make?”

- Treat the first response as a draft, not a verdict.

AI Is Biased

I'm sure there will be some who read this and think, "Oh, this crazy conspiracy theorist..." But, hear me out.

People are biased. As a result, the following is true:

- Google results are biased because they're shaped by what people search for most often.

- Social media is biased because it's designed to give you what you want to hear.

- Media is biased because it has had over 100 years (really, longer) to learn how to craft stories that keep your attention.

All of these biased sources feed into your AI searches. The next time you search in ChatGPT or Grok, notice how many of the cited sources are social media, Google results, and the like. And regardless of how you feel about Elon Musk, Grok doesn't only look at X.

AI can't help but be biased, for two key reasons:

- The first we just discussed... its inputs are biased.

- The second is from the previous section... it's lazy.

If AI were human, it would be someone who reads the mainstream press, absorbs the dominant narratives, and answers from that perspective unless pushed otherwise. It doesn’t naturally say, “Before we go further, how are we defining this?” It doesn’t automatically surface minority perspectives. It retrieves what is most frequently said and gives that to you.

That’s how it was trained.

Large language models learn from massive volumes of public discourse. That discourse contains cultural, political, and economic biases. But even beyond the raw data, the architecture favors probability dominance, meaning common examples and consensus framing carry more weight.

This isn’t necessarily agenda-driven bias. It’s statistical gravity. The model drifts toward what is most represented and most accepted.

If most people accept first-pass answers without probing deeper, that center-of-gravity framing becomes the authority, whether it's right or wrong.

What you can do about it:

- Never, ever assume the first answer given is the gospel truth. It might be, but don't assume it.

- Ask for the strongest arguments from multiple sides.

- Request minority or dissenting viewpoints explicitly.

- Clarify definitions before accepting conclusions.

- Remember that “most common” is not the same as “most complete.”

- Even when (especially when) you agree with the answer, ask it to try to convince you that its first response is false. Challenge it regularly.

Don't Lose Hope in AI

I believe that AI is a miracle of modern technology. There are things today I can do in my career that I could never have dreamed possible just 2 years ago. What I've learned through the process of adopting and embracing AI has made me better in marketing, design, development, and business. But, I also treat AI as the smartest, most junior employee I've ever hired. I'm the one with 30 years of lived business and marketing experience. It's the one with all of history's information.

AI can't work without my strategy and experience. I can't work, in today's technology environment, without its knowledge.

Oh, in case it wasn't obvious, the banner image is AI-generated. You might think I took that picture in real life, but the flag has 15 stripes, which is the most obvious hint. But, I mean, come on. Ain't that, like, totally patriotic?

*A little footnote on the coffee mug problem: I know some might argue that, in 2026, the problem I described in "The Coffee Mug Problem" is rapidly being solved. I agree that it's being solved and will eventually be solved, but it's not solved yet.

One model that seems to be making good progress is Google's Imagen. The image above is from Google Gemini. While it does a better job of being physics-aware, it still has a variety of issues. If it were syrup instead of coffee, it might be a little more believable.

About Corey Smith

Ready to simplify and succeed? Let’s make it happen—because your business deserves practical, no-nonsense wins. Find me on LinkedIn.